Can AI Health Conversations Serve as a New Mediator of Health Behavior Change?


Abstract

Health conversations with artificial intelligence (AI) chatbots are rapidly increasing; 17% of US adults consult AI chatbots about health monthly, and the figure reaches 25% among those under 30. In Korea, generative AI use reached 33.3% in 2024, nearly doubling from the previous year. Yet academic discourse has largely focused on information accuracy and patient safety. I propose reframing AI health conversations as a potential mediator of health behavior change. Drawing on systematic reviews showing that AI chatbots can support physical activity and smoking cessation, I connect these findings to the transtheoretical model processes of change and the health belief model construct of self-efficacy. I describe the “information-to-action question shift” as a mechanism that may drive the transition from contemplation to action, and discuss intersections with Korea’s Fifth National Health Plan (HP2030) health literacy goals.

INTRODUCTION

In June 2024, a Kaiser Family Foundation survey of 2,428 US adults found that 17% had consulted artificial intelligence (AI) chatbots about health-related matters at least once a month, with this proportion reaching 25% among those under 30 [1]. Korea is no exception. The Ministry of Science and ICT’s 2024 internet usage survey reported that AI service use in Korea reached 60.3%, and generative AI use was 33.3%—nearly double the 17.6% of the previous year—with over 80% of adults in their 20s and 30s having tried such services [2]. The Korea Information Society Development Institute reported AI chatbot use at 13.4% in its 2023 Korean media panel survey, mostly for information searching [3]. The pattern is clear: AI health conversations are no longer confined to a small group of technology adopters. They are becoming mainstream.

Yet academic discourse on this phenomenon has remained within two frames: the accuracy of the health information that AI provides and its impact on the physician–patient relationship. A different question deserves attention, one that the health promotion field has largely overlooked: can AI health conversations serve as a mediator that moves consumers toward health behavior change? I explore this question through the lens of health promotion theory.

MAIN BODY

AI chatbots and health behavior change: existing evidence

Evidence on the behavior change effects of AI chatbot interventions is accumulating. In a systematic review published in the Journal of Medical Internet Research, Aggarwal et al. [4] examined 15 studies of AI chatbots targeting healthy lifestyles (40% of studies), smoking cessation (27%), and treatment adherence (20%), and found the chatbots effective in promoting these behaviors. Singh et al. [5] extended this work with a meta-analysis of 19 studies in npj Digital Medicine, showing that chatbot interventions significantly increased total physical activity (standardized mean difference [SMD]=0.28; 95% confidence interval [CI], 0.16–0.40), moderate-to-vigorous physical activity (SMD=0.53; 95% CI, 0.24–0.83), and fruit and vegetable intake (SMD=0.59; 95% CI, 0.25–0.93). A more recent systematic review of natural language processing-based chatbot interventions confirmed consistent effects on smoking cessation, with users rating the chatbots as useful and trustworthy.

An important caveat applies: these studies examined pre-designed, rule-based, or task-specific chatbots, not the general-purpose large language model (LLM)-based conversations that consumers now use freely, so direct comparison is difficult. Still, because general-purpose LLMs may offer greater natural language flexibility, context maintenance, and personalization than earlier rule-based chatbots, the behavior change effects identified in existing research could plausibly be maintained or even amplified, although this has not yet been tested. At minimum, the available evidence suggests that conversational interaction with AI can function as a mediator of health behavior change.

Theoretical mechanisms: how AI conversations drive behavior change

Can health promotion theory explain the behavior change effects of AI chatbots? I attempt to connect them with two established models.

Transtheoretical model and the processes of change

The transtheoretical model (TTM) of Prochaska and DiClemente [6] explains behavior change through five stages—precontemplation, contemplation, preparation, action, and maintenance—and proposes 10 processes of change that enable transitions between stages.

AI health conversations connect with at least three of these processes. Consider “consciousness raising.” When a user tells an AI chatbot, “I’ve been feeling tired lately,” the chatbot may respond with follow-up questions about sleep duration, dietary habits, and stress levels, thereby sharpening the user’s awareness of their own condition. Repeated conversations may then trigger “self-reevaluation,” a cognitive shift such as “I thought I was fairly healthy, but I actually have multiple risk factors.” And when an AI chatbot presents specific, achievable behavioral options, such as “the smallest action you can take within 10 minutes after leaving work,” it may strengthen behavioral commitment, a process that aligns with “self-liberation.”

A noteworthy phenomenon is the “information-to-action question shift” I observed in AI health conversations. In one anonymized case, a worker in their 40s posed approximately 200 health questions over three months (“What foods are good for fatty liver?” “What medication should I take for high triglycerides?”), with no change in weight. But when the questions shifted toward action (“Could I take a 10-minute walk on the way to the convenience store after work?”), walking behavior was initiated (author’s observation, unpublished). In TTM terms, this corresponds to a transition from the contemplation stage (information gathering) to the preparation and action stages (execution planning). The shift bears conceptual similarity to the process in motivational interviewing whereby change talk develops into commitment language, although it has not been systematically verified in the AI conversation context. Because this is a single observational case, caution is warranted in generalizing from it; large-scale empirical verification is needed.

Health belief model and self-efficacy

The health belief model (HBM) explains health behavior through six constructs: perceived susceptibility, perceived severity, perceived benefits, perceived barriers, cues to action, and self-efficacy [7]. AI health conversations are likely to operate most strongly on two of these: self-efficacy and cues to action.

AI conversations can reinforce self-efficacy by strengthening the perception that “I can manage this.” When an AI chatbot proposes gradual, personalized goals (“Walking 10,000 steps a day might be difficult, but how about starting by walking to the convenience store?”), it creates the conditions for what Bandura [8] termed performance accomplishment, the most powerful source of self-efficacy. Cues to action are equally relevant: because AI conversations are available 24 hours a day, they can function as real-time prompts that encourage behavior “before lying on the sofa after work” or “in anxious moments at dawn.” This accessibility can help offset structural constraints of existing health promotion interventions, such as limited consultation hours and economic, geographic, or psychological barriers to counseling.

These benefits carry cognitive risks. If a user begins with incorrect health assumptions, the chatbot may reinforce them—through echo chamber effects and confirmation bias—and, combined with AI hallucinations, this may solidify erroneous beliefs. The information-to-action question shift I described carries a parallel risk: when driven by flawed premises, it may push users toward harmful behaviors rather than beneficial ones. Future AI systems should incorporate evidence-based nudges that challenge harmful assumptions rather than validate them. AI health conversations should also function as a supplement to, not a replacement for, professional medical consultation, and a human-in-the-loop approach remains critical when AI-generated advice intersects with clinical decision-making.

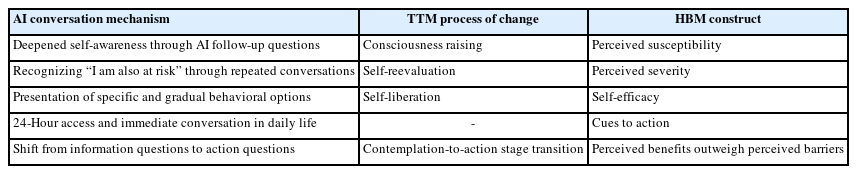

The correspondence between these AI conversation mechanisms and the constructs of the two theoretical models is summarized in Table 1.

Korean context: intersections with HP2030 (The Fifth National Health Plan)

This discussion connects directly with Korean health promotion policy. The Fifth National Health Plan (HP2030) has established health literacy enhancement as a priority task, with specific action items including the development of health literacy assessment tools, population-specific education systems, and reliable health information delivery systems [9]. The adequate health literacy level among Korean adults is currently estimated at 55%–57% [10].

Here, the health promotion potential of AI health conversations intersects with the goals of HP2030. HP2030 represents a supply-side approach—ensuring that accurate health information is provided to the public. AI health conversations represent a demand-side channel through which consumers independently explore health information and translate it into action. These approaches are complementary. If AI conversations can serve as a mediator that supports not only health information comprehension but also health behavior practice, they may broaden the concept of health literacy itself—from “information comprehension” to “behavior change.” Achieving this expansion requires cultivating critical digital health literacy—equipping users not only to access AI-generated health information but to critically evaluate and verify it against professional sources before acting on it.

Korea-specific challenges also exist. Digital literacy gaps and algorithmic biases in AI systems may create new health inequities, disproportionately affecting older adults and lower socioeconomic groups. Systems to verify the accuracy of AI-generated health information remain limited. And the risk of overreliance on AI conversations is real. These factors should be investigated before AI health conversations are promoted as a health promotion tool.

CONCLUSION

AI health conversations have already become part of mainstream health behavior. Systematic reviews and meta-analyses indicate that AI chatbot-based interventions can promote health behavior change, and these effects can be interpreted through core constructs of health promotion theory—the processes of change in TTM and self-efficacy in HBM. This phenomenon warrants study not only as a risk management issue but also as a potential new mediator of health promotion.

I see three research priorities going forward. First, randomized controlled trials should test whether open-ended conversations with general-purpose LLMs influence health behavior change. Second, longitudinal studies should examine whether the information-to-action question shift correlates with TTM stage transitions. Third, Korea-specific guidelines for AI health conversations that account for digital health equity and safe complementary use should be developed in conjunction with HP2030’s health literacy goals.

Notes

AUTHOR CONTRIBUTIONS

Dr. Sanghyun AHN had full access to all of the data in the study and takes responsibility for the integrity of the data and the accuracy of the data analysis. The author reviewed this manuscript and agreed to the individual contributions.

Conceptualization: SA. Investigation: SA. Writing–original draft: SA. Writing–review & editing: SA.

CONFLICTS OF INTEREST

The author is the Chief Medical Officer of Mobile Doctor Inc., which operates the Yeolnayo app in Korea, and the Medical Algorithm Director of MoDoc AI Inc., which operates the FeverCoach app in the United States.

FUNDING

None.

DATA AVAILABILITY

Not applicable. This article is a viewpoint and does not contain original research data.